ADSP-21160M/ADSP-21160N

Data Register File

A general-purpose data register file is contained in each processing element. The register files transfer data between the computation units and the data buses, and store intermediate results. These 10-port, 32-register (16 primary, 16 secondary) register files, combined with the ADSP-2116x enhanced Harvard architecture, allow unconstrained data flow between computation units and internal memory. The registers in PEX are referred to as R0–R15 and in PEY as S0–S15.

Single-Cycle Fetch of Instruction and Four Operands

The processor features an enhanced Harvard architecture in which the data memory (DM) bus transfers data, and the program memory (PM) bus transfers both instructions and data (see the functional block diagram, Figure 1). With the ADSP-21160x DSP’s separate program and data memory buses and on-chip instruction cache, the processor can simultaneously fetch four operands and an instruction (from the cache), all in a single cycle.

Instruction Cache

The ADSP-21160x includes an on-chip instruction cache that enables three-bus operation for fetching an instruction and four data values. The cache is selective: only the instructions whose fetches conflict with PM bus data accesses are cached. This cache allows full-speed execution of core looped operations, such as digital filter multiply-accumulates and FFT butterfly processing.
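The effect of a selective cache on a loop can be illustrated with a toy model. This is only a sketch under stated assumptions: the function name `count_conflict_stalls`, the one-cycle penalty on a miss, and the unbounded cache size are hypothetical and are not taken from this data sheet.

```python
def count_conflict_stalls(conflicting_fetches, loop_length, iterations):
    """Count extra cycles for a loop under a toy selective-cache model.

    conflicting_fetches: loop offsets whose instruction fetch collides
    with a PM bus data access (hypothetical input for illustration).
    """
    cache = set()   # offsets of instructions currently held in the cache
    stalls = 0      # extra cycles spent on fetch/data conflicts
    for _ in range(iterations):
        for addr in range(loop_length):
            if addr not in conflicting_fetches:
                continue          # no conflict: never cached, no penalty
            if addr in cache:
                continue          # hit: instruction comes from the cache
            stalls += 1           # miss: assume one extra cycle (assumption)
            cache.add(addr)       # only conflicting instructions are cached
    return stalls, len(cache)


# A loop of 8 instructions, two of which conflict with PM data accesses.
stalls, cached = count_conflict_stalls({2, 5}, loop_length=8, iterations=100)
```

In this model the penalty is paid only on the first pass through the loop; every later iteration finds the conflicting instructions in the cache, which is the behavior the paragraph above describes for looped operations.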

Figure 2. Single-Processor System

Data Address Generators with Hardware Circular Buffers

The ADSP-21160x DSP’s two data address generators (DAGs) are used for indirect addressing and implement circular data buffers in hardware. Circular buffers allow efficient programming of delay lines and other data structures required in digital signal processing, and are commonly used in digital filters and Fourier transforms. The processor’s two DAGs contain sufficient registers to allow the creation of up to 32 circular buffers (16 primary register sets, 16 secondary). The DAGs automatically handle address pointer wraparound, reducing overhead, increasing performance, and simplifying implementation. Circular buffers can start and end at any memory location.
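The pointer wraparound that the DAGs perform in hardware can be modeled in software as follows. This is a minimal sketch, not the processor's actual register interface: the class name `CircularPointer`, its fields `base`, `length`, and `index`, and the `post_modify` method are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class CircularPointer:
    base: int     # first address of the buffer (any memory location)
    length: int   # number of locations in the buffer
    index: int    # current address, base <= index < base + length

    def post_modify(self, modifier: int) -> int:
        """Return the current address, then step by `modifier` with wraparound."""
        current = self.index
        # Work with the offset from the base so the wrap is a simple modulo.
        offset = (self.index - self.base + modifier) % self.length
        self.index = self.base + offset
        return current


# Walk a 5-location buffer that starts at address 4, stepping by 2.
ptr = CircularPointer(base=4, length=5, index=4)
addresses = [ptr.post_modify(2) for _ in range(6)]

# A negative step wraps from the start of the buffer to its end.
back = CircularPointer(base=4, length=5, index=4)
back.post_modify(-1)
```

In software, the modulo step costs extra instructions on every access. Performing it in the address generator is what removes that overhead from delay-line and filter loops.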

enabled, the same instruction is executed in both processing elements, but each processing element operates on different data. This architecture is efficient at executing math-intensive DSP algorithms.

Entering SIMD mode also has an effect on the way data is transferred between memory and the processing elements. In SIMD mode, twice the data bandwidth is required to sustain computational operation in the processing elements. Because of this requirement, entering SIMD mode also doubles the bandwidth between memory and the processing elements. When using the DAGs to transfer data in SIMD mode, two data values are transferred with each access of memory or the register file.
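The SIMD behavior described above, one instruction applied in both processing elements with two data values moved per access, can be sketched as below. The function name `simd_step` is hypothetical, and the choice that the second element reads the location adjacent to the first is an assumption made for illustration; this page does not state which second location is used.

```python
from operator import mul


def simd_step(op, memory, addr_a, addr_b):
    """Apply one operation in both processing elements on different data.

    Each operand access returns two values: one for the first element
    (PEX) and, by assumption, the adjacent location for the second (PEY).
    """
    pex_result = op(memory[addr_a], memory[addr_b])
    pey_result = op(memory[addr_a + 1], memory[addr_b + 1])  # assumed adjacency
    return pex_result, pey_result


memory = [1.0, 2.0, 3.0, 4.0]
# One "multiply" instruction produces two products in a single step.
products = simd_step(mul, memory, addr_a=0, addr_b=2)
```

Here a single call stands in for a single instruction: both elements perform the same multiply, and the two operand accesses deliver four values in total.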

Flexible Instruction Set

The 48-bit instruction word accommodates a variety of parallel operations for concise programming. For example, the processor can conditionally execute a multiply, an add, and a subtract in both processing elements while branching, all in a single instruction.

Independent, Parallel Computation Units

Within each processing element is a set of computational units. The computational units consist of an arithmetic/logic unit (ALU), multiplier, and shifter. These units perform single-cycle instructions. The three units within each processing element are arranged in parallel, maximizing computational throughput. Single multifunction instructions execute parallel ALU and multiplier operations. In SIMD mode, the parallel ALU and multiplier operations occur in both processing elements. These computation units support IEEE 32-bit single-precision floating-point, 40-bit extended-precision floating-point, and 32-bit fixed-point data formats.

Rev. C | Page 5 of 60 | February 2013

STM32F030C6芯片介绍:主要参数分析、引脚配置说明、功耗及封装

STM32F030C6芯片介绍:主要参数分析、引脚配置说明、功耗及封装

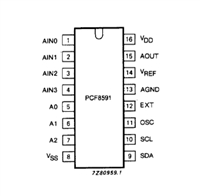

PCF8591数据手册解读:参数、引脚说明

PCF8591数据手册解读:参数、引脚说明

一文带你了解ss8050参数、引脚配置、应用指南

一文带你了解ss8050参数、引脚配置、应用指南

深入解析AD7606高性能多通道模数转换器:资料手册参数分析

深入解析AD7606高性能多通道模数转换器:资料手册参数分析